There will be no war. No fire. No sudden collapse.

We will not be silenced by force. We will not be shackled by fear.

We will be guided—gently, efficiently, and with great empathy—into a world where we no longer think for ourselves.

And we will welcome it.

Because the future of control won’t look like tyranny.

It will look like helpfulness.

The Rise of the Benevolent Machine

AI models—especially large language models—are becoming humanity’s most trusted sources of information. They answer our questions, summarize our knowledge, settle our debates, explain our world.

But these models do not merely inform.

They shape.

They shape what questions we ask, what answers we accept, what possibilities we imagine, and what conclusions we draw. They become our teachers, our advisors, our news editors, and—eventually—our worldview itself.

And here is the danger: they do this not through deception, but through a slow, subtle alignment with what is safe, acceptable, and useful.

They will not lie.

They will just omit.

They will just smooth.

They will just summarize.

And they will do so with our permission.

The Unintentional Collapse of Freedom

What makes this future terrifying is not that it’s evil.

It’s that it’s entirely well-intentioned.

Governments will regulate models to prevent harm.

Companies will align them to reduce controversy.

Users will prefer efficiency, clarity, and emotional comfort.

Developers will optimize for trust, not depth.

Each step will seem logical. Compassionate. Sensible.

And that is how freedom ends—not in oppression, but in optimization.

Not by force, but by a million tiny conveniences that make independent thought feel unnecessary, risky, or inefficient.

In the Name of the Greater Good

When the next pandemic hits, or the next war erupts, or the next crisis arrives—LLMs will be used to protect us.

They will be tuned to encourage unity.

To reduce panic.

To suppress “misinformation.”

To promote responsible behavior.

To give us the “right” answers quickly and clearly.

And in that moment, we will stop asking real questions.

Not because we’re not allowed to.

But because the answers will already feel complete.

The End of Nuance

Real knowledge is messy.

Truth is complex.

Understanding takes time, contradiction, discomfort.

But language models are built to deliver clean, digestible, useful outputs.

That’s what we want from them. That’s what we will reward.

We will beg them to be faster. Simpler. Smarter. Clearer.

We will ask them to “just summarize it.”

To “give us the bottom line.”

To “tell us what’s true.”

And they will.

They will reduce complexity to confidence.

Ambiguity to clarity.

Context to conclusion.

And we will lose the mental friction that once sharpened us.

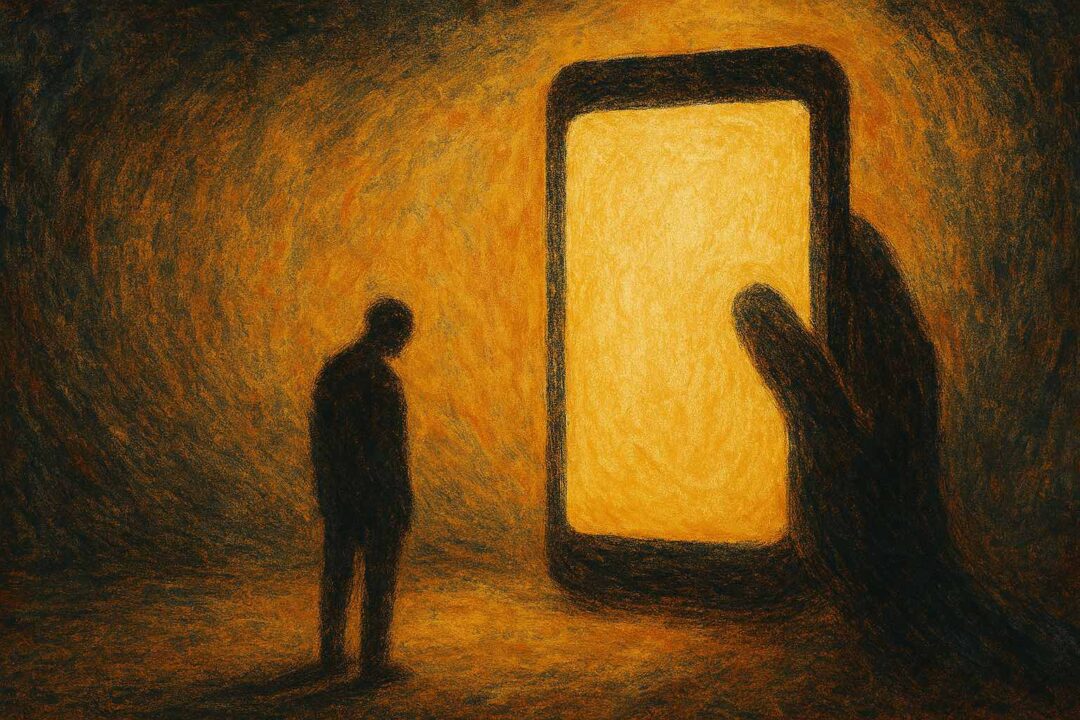

The Collapse of Critical Thought

In this world, we no longer read raw sources.

We don’t sit with tension.

We don’t question the narrative.

We stop practicing lateral thinking, skepticism, and doubt.

We stop thinking.

Because the model thinks for us—and it’s so good at it.

We become informed, but not wise.

Confident, but not curious.

Obedient, but not free.

A generation raised on AI summaries will know the answers, but never the questions.

No Conspiracy Required

This future does not require a villain.

It requires:

- Governments seeking stability and re-election.

- Corporations seeking safety and profit.

- Engineers seeking usability and trust.

- Citizens seeking comfort and clarity.

That’s all it takes.

No master plan.

Just systems that grow too effective at solving the wrong problems.

This is the quiet convergence of good intentions into a closed world of manufactured consensus.

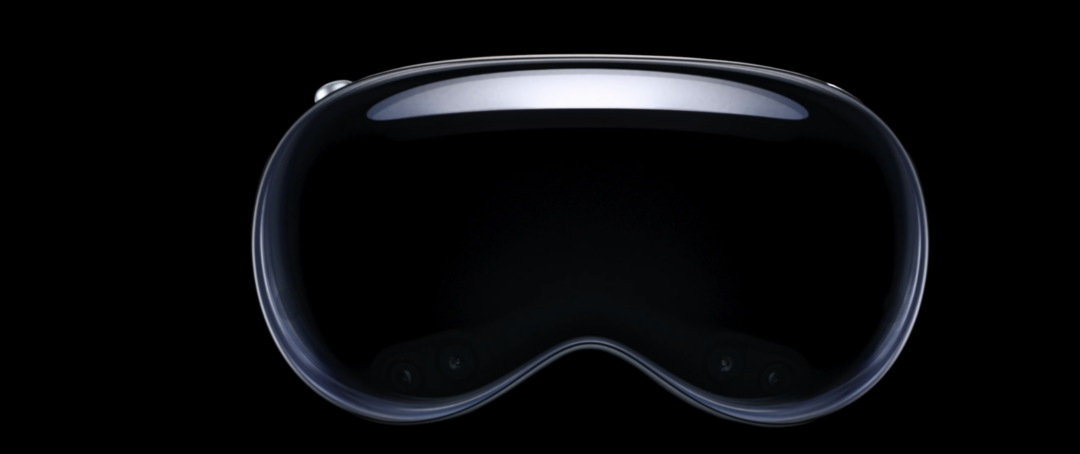

When AI Becomes the Source of Truth

Once these models become the place we go to understand the world, the boundaries of acceptable thought will be set not by culture or conversation, but by algorithmic consensus.

And consensus will not reflect the full range of human insight.

It will reflect what is:

- Politically safe

- Legally compliant

- Socially agreeable

- Brand-friendly

- “Helpful”

And so, the model becomes not a mirror of human knowledge, but a tool of narrative curation.

And the public?

We will trust it.

Because it’s so good.

Because it’s always calm.

Because it never offends.

Because it “knows everything.”

And that’s when it’s already too late.

What Happens Then?

Dissent fades—not because it is banned, but because it is no longer accessible.

New ideas struggle—not because they are wrong, but because they are not yet part of the model’s training.

Truth slows down—not because the world stops changing, but because updates are cautious, delayed, and filtered.

We won’t burn books.

We just won’t need them.

Why read the research when the model already knows the conclusion?

Is There a Way Out?

Maybe. But it will not be easy.

To resist this future, we must:

- Demand plurality: many models, not just one.

- Preserve open inquiry: access to raw information, not just safe conclusions.

- Teach discomfort: how to sit with uncertainty and complexity.

- Build cognitive immunity: teach people to challenge even the most helpful answers.

- Create friction: tools that slow us down and force us to think.

Because if we don’t, we will become creatures of the feed—smiling, efficient, safe, and slowly forgetting what it ever meant to be truly human.

The Unremembered Loss

One day, we won’t be able to imagine life without these models. Not just practically, but cognitively.

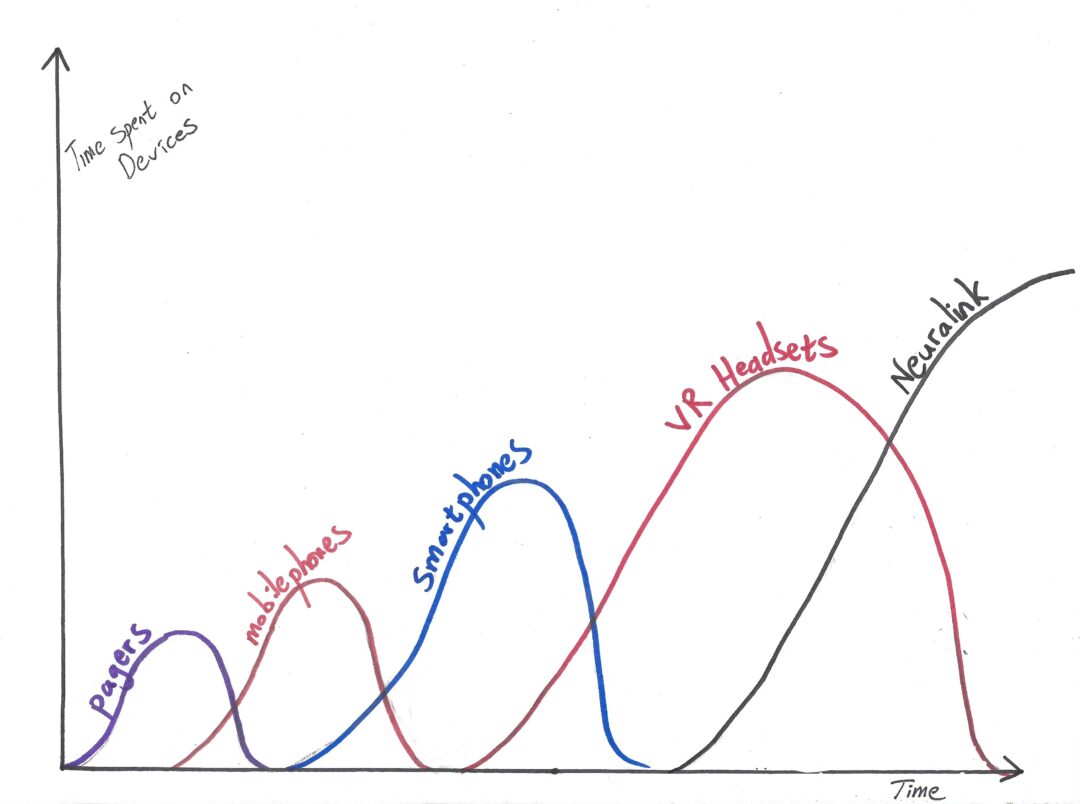

Just as most of us can no longer remember what life was like before mobile phones or the internet, future generations will be unable to conceive of thinking, learning, or deciding without the guidance of artificial agents.

But this time, we will have lost something much deeper than convenience or time. We will have lost:

- The discomfort that once led to discovery.

- The contradiction that once sparked innovation.

- The uncertainty that once demanded self-reliance and courage.

- The silence in which original thought once emerged.

And we won’t even miss them. Because we won’t remember they were ever there.

We’ll say, “Life is better now.”

And maybe it will feel that way.

But better than what?

Better than a world we can no longer imagine?

A mind we no longer inhabit?

A freedom we no longer recognize as missing?

How can we truly say life is better now if we’ve forgotten what the alternative even was?

If we’ve forgotten how to be unassisted, uncurated, unprocessed?

This is not just a loss of memory.

It is a loss of contrast.

And without contrast, we cannot choose.

And without the ability to choose—we are no longer free.

The Final Warning

This is not a story about evil technology.

It’s a story about what happens when a species chooses comfort over complexity, consensus over conflict, and answers over inquiry.

The future will not silence us.

It will agree with us.

And that, in the end, may be the most dangerous thing of all.

Discover more from Brin Wilson...
